Preliminaries. Given a finite set $\Sigma$ and a binary relation $f:\Sigma^* \to \mathcal P(\Sigma^*)$, we would use $F$# to denote the set function $\mathcal P(\Sigma^*) \to \mathcal P(\Sigma^*)$ defined by $F(S):= \bigcup_{v\in S}f(v).$ Also,$$F^0(S):= S;\quad F^{n+1}(S):= F(F^n(S))\cup F^n(S);\quad F^*(S):= \bigcup\nolimits_{n\ge 0} F^n(S).$$Intuitively, $F^*(S)$ is the set reachable from $S$ by applying $f$ to $S$

finitely many times. A binary relation is called

regular if it can be recognised by a finite automaton over alphabet $(\Sigma\cup\{\epsilon\})\times(\Sigma\cup\{\epsilon\}).$

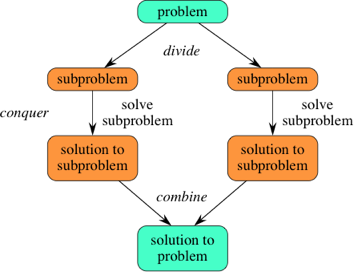

Unreachability analysis. Suppose $I$ and $B$ are two regular sets and $f$ is a regular relation. The

unreachability problem $(I,F,B)$ asks whether $B$ is unreachable from $I$ through $F$, namely, $F^*(I)\cap B=\emptyset$. In software verification, many safety properties of a software can be modelled as (un)reachability problems [1] for an infinite state system. Particularly, the semantics of a program is modelled by a binary relation over configurations $\Sigma^*.$ If we let $I$ and $B$ denote the sets of the initial and the bad configurations, respectively, then $F^*(I)\cap B=\emptyset$ means that the execution of the program would never go wrong.

Tractability. Given $(I,F,B)$, $F^*(I)$ is not necessarily regular even though $F^n(S)$ is regular for all $n\ge0.$ Also, most interesting properties about $F^*(S)$ are undecidable. This fact is not surprising as the transition relation of a Turing Machine is regular*. To make the verification problem more tractable, we assume that $f$ is

length-preserving, i.e., $w\in f(u)$ only if $|w|=|u|$, which weakens the expressive power of the transition system $(I,f)$ from a Turing machine to a

linear-bounded automaton (LBA), which is only allowed to work with the amount of space occupied by the input string. Membership of $F^*(I)$ becomes decidable with the length-preserving restriction, but unreachability remains undecidable, as $LBA\cap DFA=LBA$ and checking emptiness of LBA is undecidable. This follows from the fact one can encode a given TM $T$ as an LBA $A$ such that $L(A)$ consists of precisely the halting runs of $T$.

Remark. While a regular transition system is as powerful as a TM (which can compute functions like $f(w)=w\#w$), not all transition systems are regular. For example, transition systems induced by higher-order pushdown automata allow transitions like $w \to w\#w$ and are thus not regular.

Regular proof synthesis. While the problem is undecidable, we can given a positive answer to it as long as we can find a

proof . Such a proof can be a set $S$, called an

inductive invariant, that satisfies $B\cap S=\emptyset$ and $F^*(I)\subseteq S.$ Recall that in

this post we introduced L*, an algorithm that can learn a DFA by querying the target language. Below, we try to construct a teacher for L* to learn a

regular proof for unreachability problem $(I,F,B).$ Upon a membership queries for word $w$, the teacher checks if $I$ is reachable from $w$ through $f^{-1}$. This check is decidable thanks to the assumption that $f$ is length-preserving, which guarantees that a word is only reachable from a finite number of words. Upon an equivalence query for DFA $A$, the teacher performs the following 4 steps in turn:

Step 1. If $\exists w\in I\setminus L(A)$ then return $w$. // check that $I\subseteq L(A)$)

Step 2. If $\exists w\in L(A)\cap B$ then halt and report "reachable" if $Mem(u)=yes$; return $w$ otherwise. // check that $L(A)$ doesn't overlap $B$

Step 3. If there exist $u\in L(A)$ and $w\in f(u)\setminus L(A)$, then return $w$ if $Mem(u)=yes$ and return $u$ otherwise. // check that $L(A)$ is inductive

Step 4. Halt and report "unreachable".

If a candidate DFA $A$ passes Step 1-3, then $L(A)$ is clearly an inductive invariant. Observe that each counterexample falls in the symmetric difference of $L(A)$ and $F^*(I).$ When $F^*(I)$ is regular, it follows from the complexity results of L* that an inductive invariant can be learnt with at most $n$ equivalence queries, where $n$ is the number of states in the minimal DFA of $F^*(I)$, and at most $m\!\cdot\!(n+1)$ membership queries, where $m$ is an upper-bound on the length of the counter-examples returned by the teacher. Here we have several notes in order:

On the length-preserving restriction. We have assumed that the transition relation preserve lengths. In this way, a bad configuration is reachable from some initial configuration if and only if it can be reached within a bounded memory. This restriction is not essential for practical unreachability analysis as long as we allow "padding" in the initial set. That is, for every word in the initial configurations, say $w$, we would pad it with an arbitrary number of placeholders, say $\bot$, so that $w\in I$ implies $w\bot^*\subseteq I$. In this way, the size of available memory for computing $w$ is finite but effectively unbounded. On the other hand, though length-preserving relations are powerful enough to model many useful transition systems, they cannot model systems that require $\epsilon$ in their alphabet, such as lossy channel machines.

Minimality of proof. While L* always learns a minimal DFA by the Myhill-Nerode theorem, here it does not necessarily learn the minimal inductive invariant in terms of set inclusion. Instead, depending on the counterexamples returned by the teacher, L* would try to learn one of the inductive invariants and, when it found one, it learns the minimal DFA for that particular invariant.

Completeness of the method. When $F^*(I)$ is regular, L* is guaranteed to find a proof. When $F^*(I)$ is not regular, however, our method may not find a regular proof even when one exists. On the other hand, checking unreachability is in fact decidable when a regular proof exists, since we can launch two procedures at the same time, one looking for a counterexample and one looking for a proof. From this aspect, L* learning is weaker in completeness than brute force enumeration. (Note that finding a DFA for $F^*(I)$ may still be undecidable even when $F^*(I)$ is regular.)

A non-terminating example. Consider an unreachability problem $(I,F,B)$ where $I:=\{(ab)^n:n\ge 1\}$, $f:= \{(xbay, xaby): x,y\in \{a,b\}^*\}$, and $B$ is some trivial set such as $\emptyset.$ Note that $F^*(I)$ is not regular, since $F^*(I)\cap a^*b^*=\{a^nb^n:n\ge1\}$ is not regular. Now consider a Teacher implementing Step 1-4 for equivalence queries that would return a

shortest counterexample for the candidate proof. Below we list the queries and the automata $A$ learnt by L* in each iteration.

Iteration 1: $A=\emptyset$; containment check of $I$ fails with counterexample $ab$; add $ab.$

Iteration 3: $A=a(ba)^*b$; inductiveness check fails at $abab \to aabb$; add $aabb.$

digraph finite_state_machine {

rankdir=LR;

size="8,5"

node [shape = doublecircle]; 3 ;

node [shape = circle];

1 -> 2 [ label = "a" ];

2 -> 3 [ label = "b" ];

3 -> 2 [ label = "a" ];

"init" [shape = point];

"init" -> 1;

}

Iteration 4: $A=a(ba|ab)^*b$; inductiveness check fails at $aababb \to aaabbb$; add $aaabbb.$

digraph finite_state_machine {

rankdir=LR;

size="8,5"

node [shape = doublecircle]; 4 ;

node [shape = circle];

2 -> 3 [ label = "b" ];

1 -> 3 [ label = "a" ];

3 -> 2 [ label = "a" ];

3 -> 4 [ label = "b" ];

4 -> 3 [ label = "a" ];

"init" [shape = point];

"init" -> 1;

}

Iteration 5: $A=a(ba|a(ab)^*b)^*b$; inductiveness check fails at $aaababbb \to aaaabbbb$; add $aaaabbbb.$

digraph finite_state_machine {

rankdir=LR;

size="8,5"

node [shape = doublecircle]; 5 ;

node [shape = circle];

2 -> 3 [ label = "b" ];

3 -> 2 [ label = "a" ];

3 -> 4 [ label = "b" ];

1 -> 4 [ label = "a" ];

4 -> 3 [ label = "a" ];

4 -> 5 [ label = "b" ];

5 -> 4 [ label = "a" ];

"init" [shape = point];

"init" -> 1;

}

One can observe that L* got trapped from the 3rd iteration - it keeps splitting state 2, creating a lineage that leads to a divergent sequence of counterexamples. On the other hand, L* has indeed tried its best after the 3rd iteration: each time L* receives a counterexample of length $2n$, it succeeds to find an inductive proof for the reachable configurations of length $\le 2n$, namely $A_n:=\{w\in \{a,b\}^*:|w|\le n$ and $0\le$#$a-$#$b\le n$ in all prefixes of $w \}.$ Note that $|A_{n+1}|=|A_{n}|+1.$ Due to the fact that L* progresses incrementally in terms of automata size, and that $a^{n+1}b^{n+1}$ is the shortest counter-example for $A_n$, L* will never reach an automaton covering all possible $A_n$.

Learning with witnesses. We have discussed how to use the L* learning algorithm to prove $F^*(I)\cap B=\emptyset$. In order to answer membership queries, we assumed that the transition relation is length-preserving. However, it is possible to use L* without this assumption. For example, Vardhan

et al. [3] proposed to learn a set of configuration-witness pairs as a proof, where a

witness refers to the number of steps needed to reach a configuration. More precisely, the proof they proposed to learn is a

regular subset of $((\Sigma\cup\{\bot\})\times\{0,1\})^*$, where $\bot$ serves as a padding symbol. Each word in this set encodes a pair $(c,n)$, meaning that $c$ is a configuration contained in $F^n(I)$. Let $[c,n]$ denote the encoding of $(c,n).$ Given $I$, $f$, and $B$, we define $I'$, $f'$, and $B'$ as

$\begin{eqnarray*}

I' & := & \{[c,0]: c\in I\};\\

f'(S) &:=& \{[f(c),n+1]: [c,n]\in S\};\\

B' &:= & \{[c,n]:c\in B, n\ge 0\}.

\end{eqnarray*}$

Note that if $I$, $f$, and $B$ are regular then so are $I'$, $f'$, and $B'.$

It is straightforward to learn a proof for unreachability problem $(I',F',B')$ using L*: membership query is decidable thanks to the presence of witness, and equivalence query can be answered as we did before, aiming to find an inductive invariant as an over-approximation. After L* learns a proof for the new problem, we obtain a proof for the original problem by projection. However, note that the transformation from configurations to configuration-witness pairs doesn't preserve regularity. Hence, the transformed problem $(I',F',B')$ doesn't necessarily have a regular proof even when the original problem $(I,F,B)$ does. In particular, unlike the previous method, the learning process here doesn't guarantee to terminate when $F^*(I)$ is regular. It would be interesting to characterise the classes for which this technique is effective, namely, the classes where regularity of $F^*(I)$ implies that of $F'^*(I')$.

References

1. A unified approach for studying the properties of transitions systems, TCS 1982.

2. Learning to prove safety over parameterised concurrent systems, FMCAD 2017.

3. Learning to verify safety properties, ICFME 2004.